“Certified Fresh on Rotten Tomatoes” seems to be the go-to marketing line for most films, big and small, these days, but what exactly does that little number on the “Tomatometer” mean? Tomato scores are now thrown around so loosely that they have created many misconceptions about what they really stand for, and the confusion has only grown as more review aggregators have risen to prominence. From rotten splats to letter grades to precise numerical values, moviegoers’ main sources for film criticism now offer a wider variety of scores than ever.

Of course, that variety also breeds confusion and skepticism about the validity of such ratings. “How can the CinemaScore grade be so high when it’s not even Certified Fresh?” The examples go on and only get more tiresome. Well, if you’ve ever wondered where the letter grades on CinemaScore come from, or how Metacritic does its own calculations with film reviews, you’re in luck, because we at DiscussingFilm have broken it all down. And if you think you already know the answer, the truth may be a bit more complicated than you expect. Discover the ins and outs of Rotten Tomatoes, CinemaScore, Metacritic, and IMDb ratings below, and clear up any misconceptions you may have about the major review aggregators.

Rotten Tomatoes

Rotten Tomatoes needs no introduction; everyone’s familiar with the infamous Tomatometer. So what does that number mean? Let’s dive in.

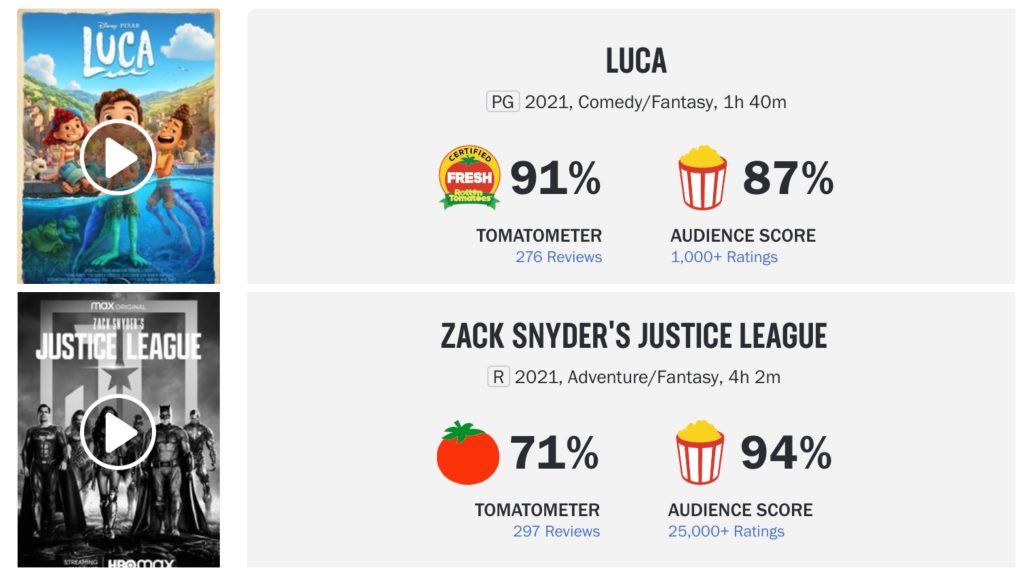

On any given film’s Rotten Tomatoes page, you are presented with two percentages: the Tomatometer and the Audience Score. The distinction between them is important.

Tomatometer

On the left, the Tomatometer is accompanied by a “Certified Fresh” badge, a regular red tomato, or a green splat, depending on its score. This number is not an average rating but a measure of how widely critics liked the film: it is the percentage of reviews from approved critics that are positive. Unless critics self-submit their reviews, specially hired curators collect them and tally the number of Fresh and Rotten reviews to generate Tomatometer scores.
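To see why a percentage of positive reviews is not the same as an average rating, here is a minimal Python sketch. It assumes each review has already been reduced to Fresh (`True`) or Rotten (`False`) and that the result is rounded to a whole number; the function name `tomatometer` and the rounding are our own illustrative choices, not Rotten Tomatoes’ actual code.

```python
def tomatometer(fresh_flags: list[bool]) -> int:
    """Percentage of reviews marked Fresh, rounded to a whole number (hypothetical sketch)."""
    if not fresh_flags:
        raise ValueError("a score needs at least one review")
    fresh_count = sum(fresh_flags)  # True counts as 1, False as 0
    return round(100 * fresh_count / len(fresh_flags))


# Hypothetical example: ten critics who each mildly liked a film (6/10) and marked it Fresh.
ratings_out_of_10 = [6] * 10
fresh_flags = [True] * 10

print(tomatometer(fresh_flags))                         # 100
print(sum(ratings_out_of_10) / len(ratings_out_of_10))  # 6.0
```

A unanimous but lukewarm reception earns a perfect 100%, even though the average critic in this example rated the film only 6 out of 10.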

To be “Certified Fresh,” a film must consistently hold a score of 75% or higher and have at least 80 reviews for wide releases or 40 reviews for limited releases. Of those reviews (regardless of rating), at least five must come from the site’s Top Critics, who are described as “well-established, influential, and prolific.”

A film that does not meet these criteria displays a regular red tomato as long as its score stays at 60% or above; once its score falls below 60%, it is considered Rotten.
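The badge logic described above can be sketched as a small Python function. This is a simplification under stated assumptions: it checks a single snapshot of the score rather than whether the score holds “consistently,” it treats a score of exactly 75% as qualifying, and the function and parameter names are hypothetical.

```python
def tomatometer_badge(score: int, total_reviews: int,
                      top_critic_reviews: int, wide_release: bool) -> str:
    """Classify a Tomatometer score (hypothetical, single-snapshot sketch)."""
    min_reviews = 80 if wide_release else 40  # wide vs. limited release
    if score >= 75 and total_reviews >= min_reviews and top_critic_reviews >= 5:
        return "Certified Fresh"
    if score >= 60:
        return "Fresh"   # regular red tomato
    return "Rotten"      # green splat


print(tomatometer_badge(92, 250, 40, wide_release=True))  # Certified Fresh
print(tomatometer_badge(92, 60, 12, wide_release=True))   # Fresh (too few reviews for a wide release)
print(tomatometer_badge(55, 300, 50, wide_release=True))  # Rotten
```

Note that the same 92% film would qualify as Certified Fresh with only 60 reviews if it were a limited release.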

Audience Score

Similarly, the Audience Score is the percentage of positive ratings from audiences. For films in theaters, Rotten Tomatoes asks users to verify their ticket purchase before submitting a rating, producing “Verified Ratings” that are less vulnerable to spam. The “All Audience Score,” by contrast, includes every rating, with or without a verified ticket purchase, and is therefore more susceptible to fraudulent reviews meant to artificially inflate or deflate a film’s score.

To calculate the Audience Score, Rotten Tomatoes counts ratings of 3.5 stars or higher as positive and anything below that as negative.

As with the Tomatometer, shows or films with a score of 60% or higher display a full popcorn bucket, while those below that threshold display a tipped-over bucket. Audience Scores are only available for films that are publicly available and have “enough ratings to generate a score.”
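Here is the same idea applied to audience ratings, as a minimal Python sketch. It assumes ratings on Rotten Tomatoes’ star scale, counts 3.5 stars and up as positive, and uses 60% as the popcorn-bucket cutoff. The minimum number of ratings needed to “generate a score” is not public, so this sketch simply refuses an empty list; the names are our own.

```python
POSITIVE_THRESHOLD = 3.5  # stars; ratings at or above this count as positive
FULL_BUCKET_MIN = 60      # percent needed for the full popcorn bucket


def audience_score(star_ratings: list[float]) -> int:
    """Percentage of ratings at or above the positive threshold (hypothetical sketch)."""
    if not star_ratings:
        raise ValueError("not enough ratings to generate a score")
    positive = sum(1 for stars in star_ratings if stars >= POSITIVE_THRESHOLD)
    return round(100 * positive / len(star_ratings))


def popcorn_icon(score: int) -> str:
    return "full bucket" if score >= FULL_BUCKET_MIN else "tipped-over bucket"


ratings = [5, 4.5, 3.5, 3, 2]      # three of five ratings are 3.5 stars or higher
score = audience_score(ratings)
print(score, popcorn_icon(score))  # 60 full bucket
```

Notice that the 3-star rating, which many viewers would consider a perfectly fine grade, counts against the film just as much as the 2-star one.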

For more information on the Tomatometer, click here.

CinemaScore

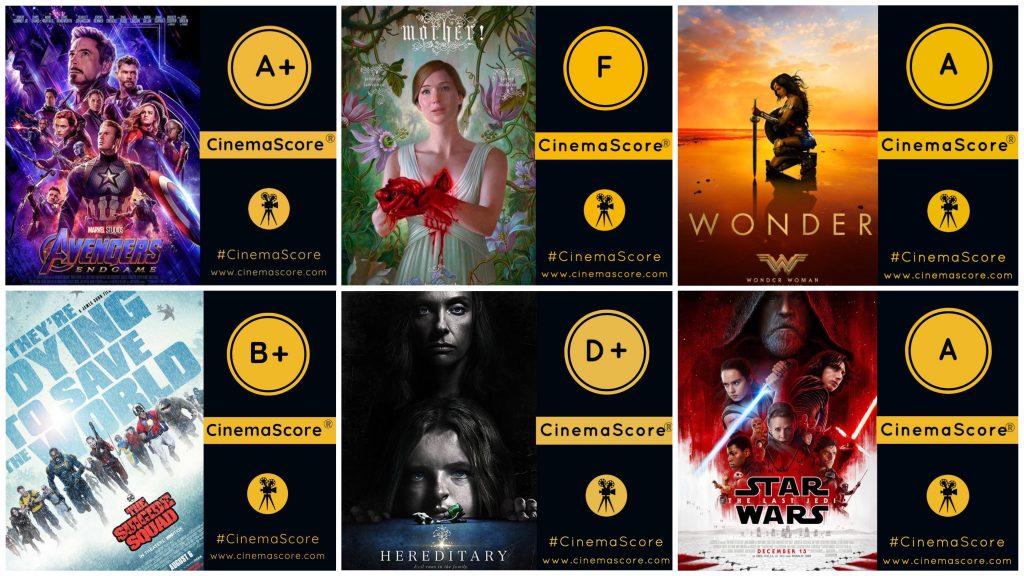

Founded in 1978, CinemaScore is still among the most popular measures of audience reaction to a movie. Its grades most commonly appear on social media as a slick graphic with a letter grade beside a movie poster, yet many people have never stopped to ask where that grade comes from:

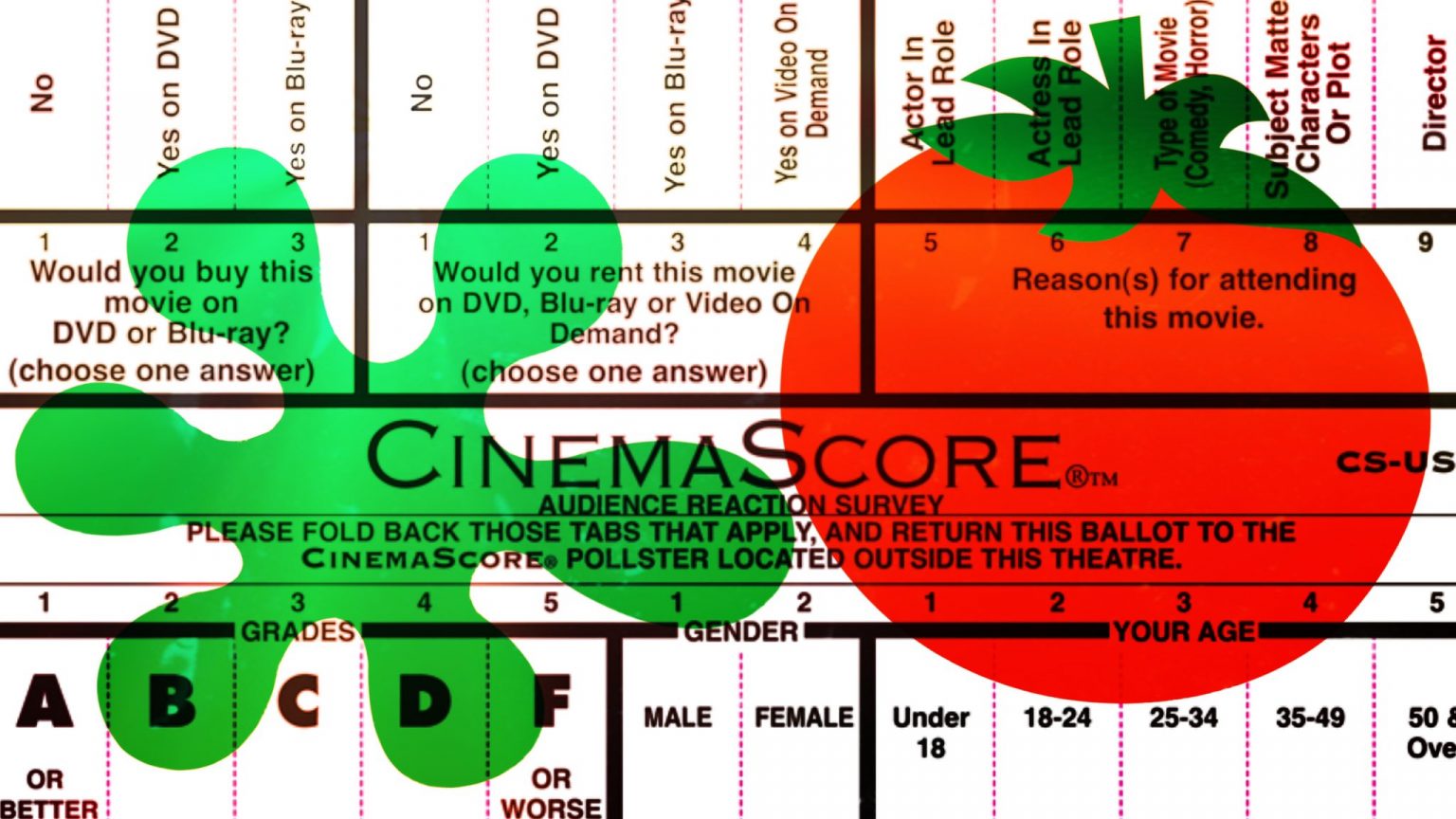

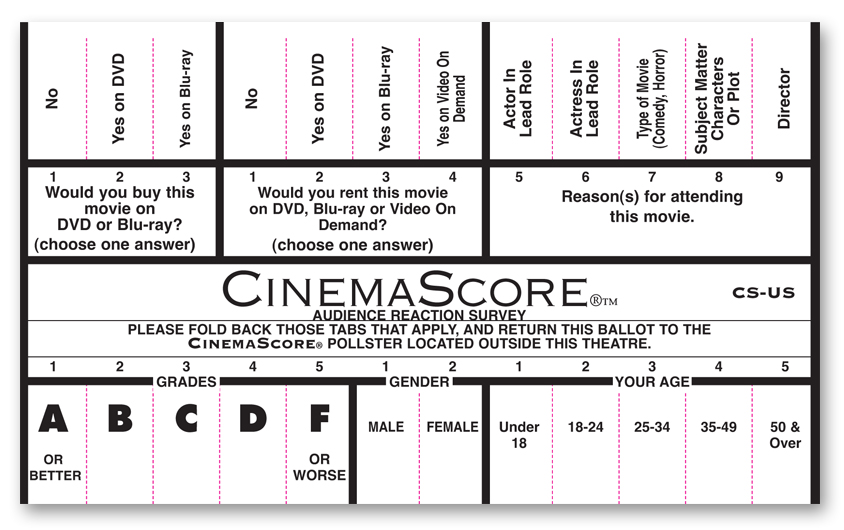

Long story short, it comes from this card, given to audiences on a film’s opening night.

In a “regionally-balanced and statistically robust” variety of theaters around the United States and Canada, audiences are given the above card and asked to mark their immediate reactions before handing them in upon leaving. Scores are then averaged and announced at the end of the film’s opening weekend.
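CinemaScore does not publish how it turns a stack of cards into a single grade, so the Python sketch below is purely hypothetical. It assumes each card carries one of the letters A, B, C, D, or F, converts them to grade points on a 4-point scale, averages them, and maps the average back to a letter with a plus or minus using cutoffs we made up for illustration. It shows the general shape of “scores are averaged,” not CinemaScore’s real method.

```python
# Hypothetical grade points and cutoffs; CinemaScore's actual scale is not public.
CARD_POINTS = {"A": 4.0, "B": 3.0, "C": 2.0, "D": 1.0, "F": 0.0}
GRADE_CUTOFFS = [  # ordered from highest to lowest
    (3.83, "A+"), (3.67, "A"), (3.5, "A-"),
    (3.17, "B+"), (2.83, "B"), (2.5, "B-"),
    (2.17, "C+"), (1.83, "C"), (1.5, "C-"),
    (1.17, "D+"), (0.83, "D"), (0.5, "D-"),
]


def opening_night_grade(cards: list[str]) -> str:
    """Average hypothetical card grades and convert the result to a letter grade."""
    if not cards:
        raise ValueError("no cards collected")
    average = sum(CARD_POINTS[card] for card in cards) / len(cards)
    for cutoff, grade in GRADE_CUTOFFS:
        if average >= cutoff:
            return grade
    return "F"


print(opening_night_grade(["A"] * 8 + ["B"] * 2))  # A (average of 3.8)
```

Under these made-up cutoffs, even a crowd that hands in mostly A’s can land below an A+, which is one reason a grade like “A-” can still signal a fairly happy opening-night audience.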

Unlike online aggregate or submission sites, CinemaScore is calculated from a real-world poll. Because the poll is conducted on a film’s opening night, repeat viewings and casual viewers have a negligible effect on the final score. It also means the grade reflects an audience’s immediate first impression more than a film’s overall quality.

Put simply, CinemaScore measures how well an eager audience’s expectations are satisfied, which is why most blockbusters can be expected to do reasonably well. If an audience gets what it expects, the film is more likely to earn a higher score, which makes CinemaScore a useful tool for studios evaluating the success of their marketing campaigns.

CinemaScore’s secondary purpose is to “collect demographic information,” which makes it valuable to studios and media outlets for gauging exactly who is seeing these films.

For more information about CinemaScore, you can visit their website.

Metacritic

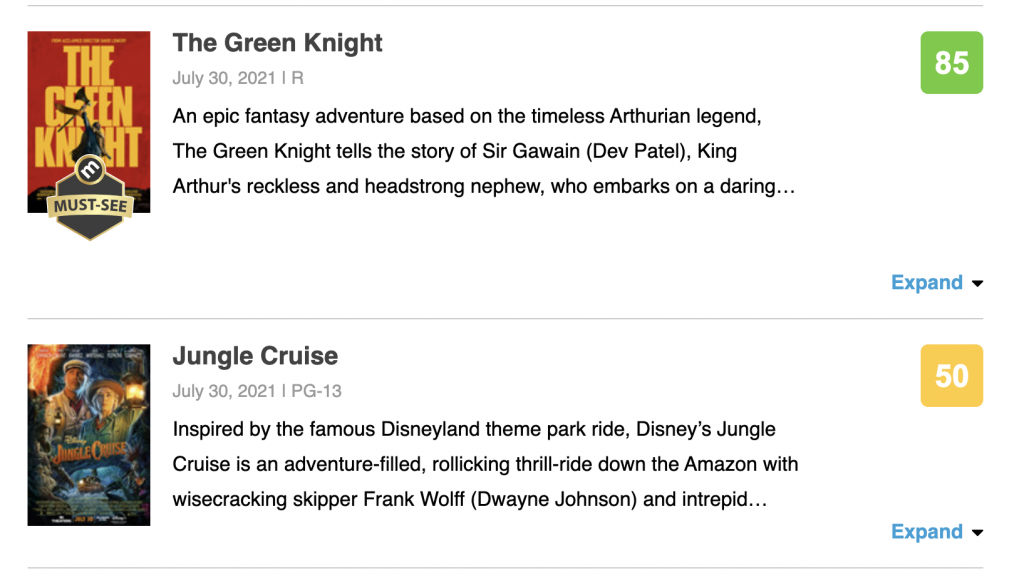

Metacritic’s scope is broader than that of the aforementioned rating tools: it hosts scores for games, movies, TV shows, and music, which are all calculated in generally the same way. The twist here is that Metacritic uses weighted averages, meaning some reviews carry more weight in the overall score than others.

Certain publications and critics are given more weight “based on their quality and overall stature.” Because well-established writers are prioritized, smaller outlets and less developed voices count for less in a Metascore. Metacritic reevaluates its list of included publications several times each year, but it does not disclose the weights assigned to individual critics or publications in its Metascore formula.

Metacritic staffers assign a score from 0 to 100 to each publication’s review of a given piece of content, plug those scores into the Metascore formula, and then adjust the result with a normalization calculation. As described in the official FAQ: “Normalization causes the scores to be spread out over a wider range, instead of being clumped together. Generally, higher scores are pushed higher, and lower scores are pushed lower.” Take that as you will.
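A weighted average is easy to illustrate, even if Metacritic’s real weights are secret. The Python sketch below uses made-up weights (1.5 for a hypothetical prestigious outlet, 1.0 and 0.5 for two others) and leaves out the normalization step entirely, since its formula is not published.

```python
def weighted_metascore(reviews: list[tuple[int, float]]) -> int:
    """Weighted average of 0-100 review scores; the weights are hypothetical."""
    total_weight = sum(weight for _, weight in reviews)
    if total_weight <= 0:
        raise ValueError("need at least one review with a positive weight")
    weighted_sum = sum(score * weight for score, weight in reviews)
    return round(weighted_sum / total_weight)


# (score, weight) pairs; the weights are invented for illustration only.
reviews = [(90, 1.5), (60, 1.0), (40, 0.5)]
equal_weights = [(score, 1.0) for score, _ in reviews]

print(weighted_metascore(reviews))        # 72
print(weighted_metascore(equal_weights))  # 63
```

Giving the glowing review from the heavier-weighted outlet more say lifts the result from a plain average of 63 to 72, which is exactly why the undisclosed weights matter.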

There’s also the color-coding system! For movies, scores from 0-39 display as red, 40-60 as yellow, and 61-100 as green.

Films with a Metascore at or above 81 after being reviewed by 15 or more publications are given the Metacritic “Must-See” Award. According to Metacritic, “approximately 5% of movies in Metacritic’s database achieve this elite status.”
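Both of these rules are simple thresholds, sketched below in Python. The color bands are the ones listed above for movies, and the Must-See check uses the 81-point and 15-review cutoffs; the function names are our own.

```python
def metascore_color(score: int) -> str:
    """Color band for a movie Metascore (0-39 red, 40-60 yellow, 61-100 green)."""
    if not 0 <= score <= 100:
        raise ValueError("Metascores run from 0 to 100")
    if score >= 61:
        return "green"
    if score >= 40:
        return "yellow"
    return "red"


def is_must_see(score: int, num_reviews: int) -> bool:
    """Must-See: a Metascore of 81 or higher from at least 15 publications."""
    return score >= 81 and num_reviews >= 15


print(metascore_color(81), is_must_see(81, 15))  # green True
print(metascore_color(60), is_must_see(95, 12))  # yellow False
```

A film scoring 95 from only 12 publications is green but misses the award, since both conditions have to hold.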

Note that ordinary site visitors’ reviews are not counted in a Metascore; it is a critics-only measure of quality.

For more information on the arbitrary measure that is the Metascore, click here.

IMDb Ratings

Displayed at the top of each film’s page, the IMDb rating is among the first scores that come up in a quick Google search of a movie title. It is a quick, easy way to gauge whether people like a movie, but where does that number come from?

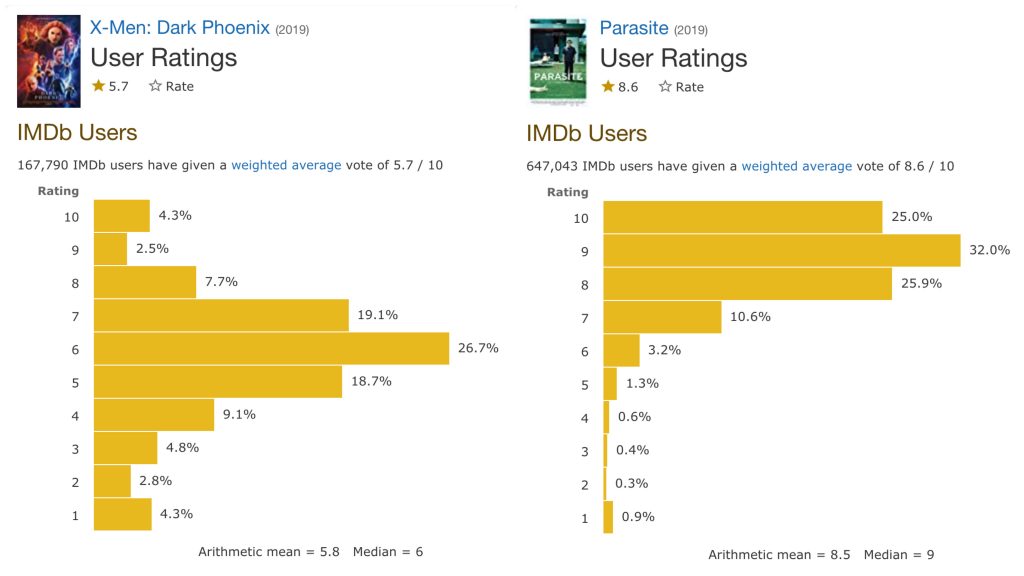

Turns out, it’s not all that complicated: any registered user can rate any film on a scale of 1 to 10 and thereby contribute to its IMDb rating. That said, votes associated with “suspicious voting activity” may receive a lower weight in the overall average “in order to preserve the reliability” of the score.
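IMDb does not publish how it detects suspicious votes or how much they are discounted, so the Python sketch below assumes a hypothetical `suspicious` flag on each vote and a made-up weight of 0.25 for flagged votes. It shows how down-weighting blunts a burst of ballot-stuffing 10s.

```python
from dataclasses import dataclass

SUSPICIOUS_WEIGHT = 0.25  # hypothetical: how much a flagged vote counts


@dataclass
class Vote:
    rating: int        # 1 to 10
    suspicious: bool   # hypothetical flag; IMDb's detection method is not public


def weighted_rating(votes: list[Vote]) -> float:
    """Weighted average rating, rounded to one decimal place like IMDb's display."""
    if not votes:
        raise ValueError("no votes")
    weights = [SUSPICIOUS_WEIGHT if v.suspicious else 1.0 for v in votes]
    total = sum(w * v.rating for w, v in zip(weights, votes))
    return round(total / sum(weights), 1)


genuine = [Vote(6, suspicious=False)] * 4
stuffed = [Vote(10, suspicious=True)] * 6

print(weighted_rating(genuine + stuffed))                           # 7.1
print(weighted_rating(genuine + [Vote(10, suspicious=False)] * 6))  # 8.4
```

Without any down-weighting, six flagged 10s would drag the rating up to 8.4; discounting them keeps it at 7.1.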

Since all ratings are attached to registered accounts, IMDb can break the score down by age, gender, and US or non-US users. This makes for some interesting data, though it is less reliable than CinemaScore’s, since it is self-reported online rather than collected in person.
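Because every rating is tied to an account, slicing the data is just a matter of grouping. This hypothetical Python sketch assumes each vote arrives as a (group, rating) pair, with group labels such as “US” and “non-US” chosen for illustration, and computes a plain, unweighted average per group; IMDb’s own breakdowns may apply additional weighting.

```python
from collections import defaultdict


def ratings_by_group(votes: list[tuple[str, int]]) -> dict[str, float]:
    """Average rating per demographic group, rounded to one decimal place."""
    groups: dict[str, list[int]] = defaultdict(list)
    for group, rating in votes:
        groups[group].append(rating)
    return {group: round(sum(r) / len(r), 1) for group, r in groups.items()}


votes = [("US", 8), ("US", 6), ("non-US", 9), ("non-US", 7), ("non-US", 8)]
print(ratings_by_group(votes))  # {'US': 7.0, 'non-US': 8.0}
```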

You can find the IMDb Ratings FAQ here.